Last updated: March 2026

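GitKraken Insights tracks eight AI Impact metrics that measure how AI coding tools affect code quality and developer efficiency. Four metrics cover code quality impact (rework, duplication, and post-PR changes) and four cover AI adoption (active users, suggestion volume, and acceptance rates). Together they help teams see where AI tools improve or degrade code and workflow, and guide how those tools are used.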

Plan: GitKraken Insights

Platform: Browser only via gitkraken.dev

Role: Lead, Admin, or Owner

Prerequisite: Connected AI provider (Claude Code, Cursor, or GitHub Copilot). See Getting Started.

| Metric | Group | Definition |
|---|---|---|
| Copy/Paste vs Moved % | Code quality impact | Duplicated vs refactored code over time |
| Duplicated Code | Code quality impact | Lines in duplicate blocks detected |
| Percent of Code Rework | Code quality impact | Recently written code modified again |
| Post PR Work Occurring | Code quality impact | Follow-up work and fixes after merge |
| Active Users | AI adoption | Unique users active in connected AI providers |
| Suggestions | AI adoption | AI-generated suggestions offered (by total lines) |
| Prompt Acceptance Rate | AI adoption | % of prompt suggestions accepted |
| Tab Acceptance Rate | AI adoption | % of tab suggestions accepted |

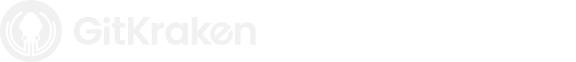

Copy/Paste vs Moved Percent

Definition: Copy/Paste vs Moved Percent compares how much code is duplicated versus refactored or relocated over time. Moved lines reflect healthy code reorganization, while copy/pasted lines often signal duplicated logic that can lead to technical debt.

Tracking this metric helps teams distinguish between maintainable refactoring and potentially problematic duplication. This is especially important for teams using AI coding assistants, which tend to duplicate code rather than abstract or reuse it, leading to higher long-term maintenance costs if left unchecked.

You can hover over points on the chart to view the exact percentages for a specific time period, making it easy to see changes before and after implementing an AI coding tool.
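To make the comparison concrete, here is a minimal Python sketch of how a copy/paste versus moved split could be computed for one time period. It assumes the two percentages are shares of the combined copy/pasted and moved line total, which this page does not confirm. The `PeriodLineCounts` class and `copy_paste_vs_moved_percent` function are hypothetical names for illustration, not part of GitKraken Insights.

```python
from dataclasses import dataclass


@dataclass
class PeriodLineCounts:
    """Hypothetical per-period totals; not GitKraken's actual data model."""
    period: str
    copy_pasted_lines: int
    moved_lines: int


def copy_paste_vs_moved_percent(counts: PeriodLineCounts) -> tuple[float, float]:
    """Return (copy/paste %, moved %) as shares of the combined total.

    Assumption: the two percentages are relative to each other and sum to 100.
    """
    total = counts.copy_pasted_lines + counts.moved_lines
    if total == 0:
        return (0.0, 0.0)  # no copy/pasted or moved lines in this period
    copy_pct = 100.0 * counts.copy_pasted_lines / total
    return (copy_pct, 100.0 - copy_pct)


before = PeriodLineCounts("before AI rollout", copy_pasted_lines=300, moved_lines=700)
print(copy_paste_vs_moved_percent(before))  # (30.0, 70.0)
```

Comparing the tuple for a period before an AI tool was introduced with one after it mirrors what the chart hover shows.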

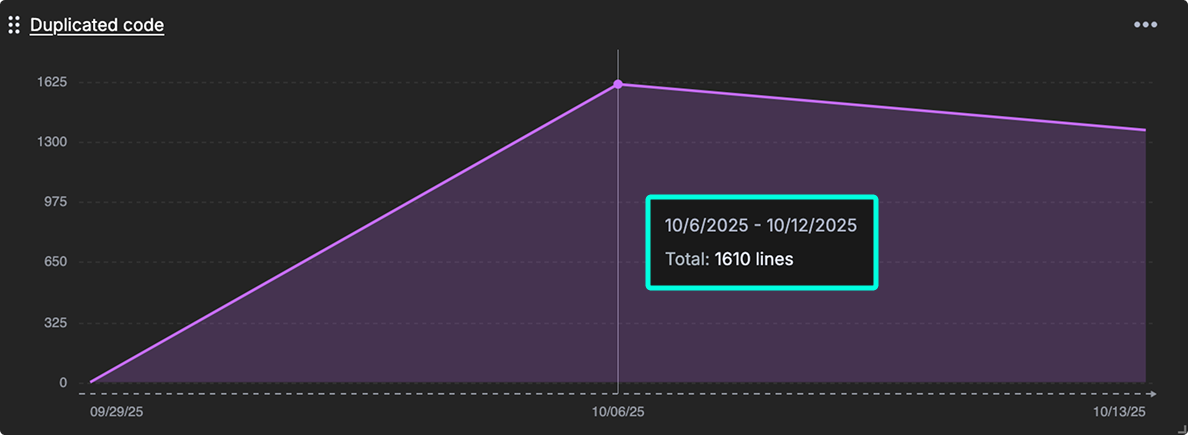

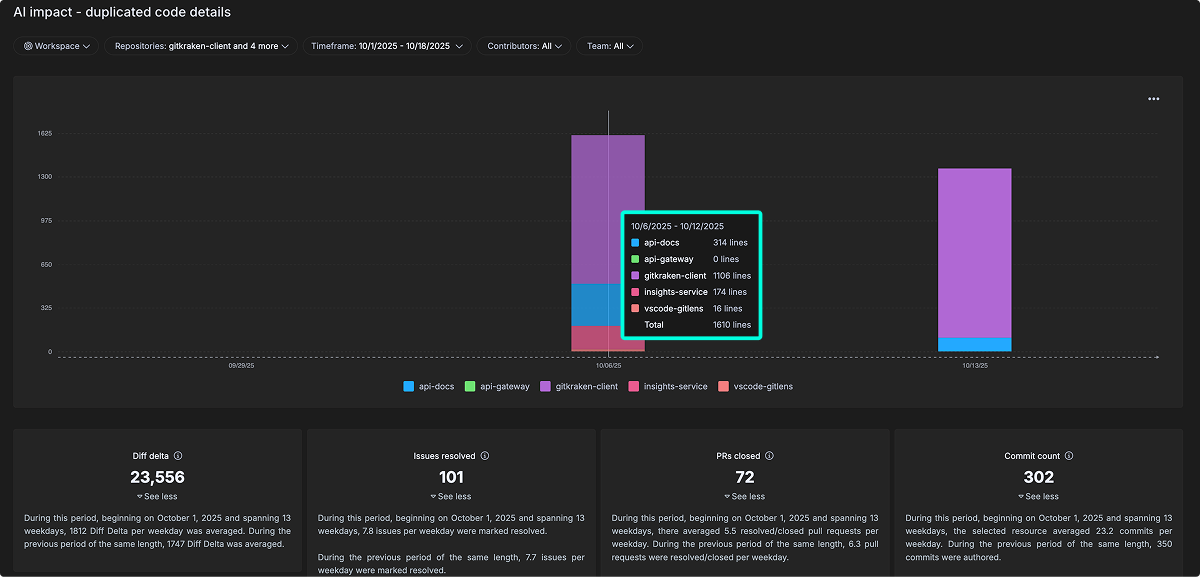

Duplicated Code

Definition: The number of lines in duplicate blocks detected.

Duplicated Code measures redundant code blocks in your codebase. It correlates with increased maintenance costs and a higher risk of defect propagation, since duplicated logic must be updated consistently across multiple places.

When duplication rises, it often signals that AI-assisted or manual coding practices are copying code without enough refactoring.

The detailed view breaks this down by repository and time period, showing where duplication is concentrated and how it changes alongside overall development activity, such as commits, pull requests, and issues resolved. This helps teams connect code duplication trends to broader workflow patterns and assess the real impact of AI tools on code quality.
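As an illustration of what "lines in duplicate blocks" can mean, the following Python sketch counts lines that sit inside any run of consecutive lines appearing more than once. This is a simplified sliding-window approach under stated assumptions, not GitKraken's detection algorithm: the `duplicated_line_count` function, the `min_block` threshold of 3 lines, and whitespace-only normalization are all hypothetical choices.

```python
from collections import defaultdict


def duplicated_line_count(files: dict[str, list[str]], min_block: int = 3) -> int:
    """Count lines inside any block of `min_block` consecutive lines
    that appears more than once across the given files.

    Assumption: lines match when equal after stripping surrounding whitespace.
    """
    windows = defaultdict(list)  # window contents -> [(file name, start index)]
    for name, lines in files.items():
        normalized = [line.strip() for line in lines]
        for start in range(len(normalized) - min_block + 1):
            key = tuple(normalized[start:start + min_block])
            windows[key].append((name, start))

    duplicated = set()  # (file name, line index) pairs inside a repeated window
    for occurrences in windows.values():
        if len(occurrences) > 1:
            for name, start in occurrences:
                for offset in range(min_block):
                    duplicated.add((name, start + offset))
    return len(duplicated)


files = {
    "a.py": ["x = 1", "y = 2", "z = x + y", "print(z)"],
    "b.py": ["x = 1", "y = 2", "z = x + y", "return z"],
}
print(duplicated_line_count(files))  # 6
```

The first three lines of each file form one repeated block, so six lines are counted. A production detector would typically also ignore blank lines and comments and tolerate renamed identifiers.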

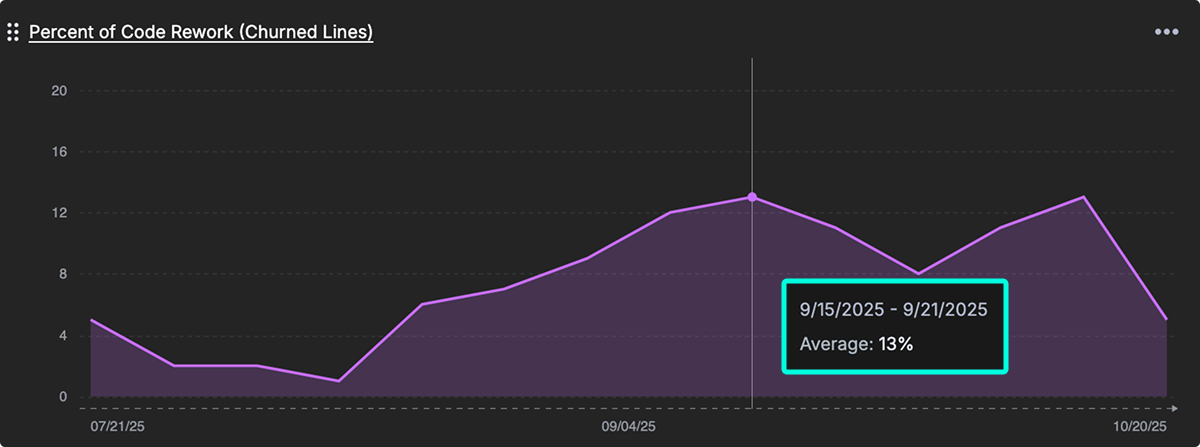

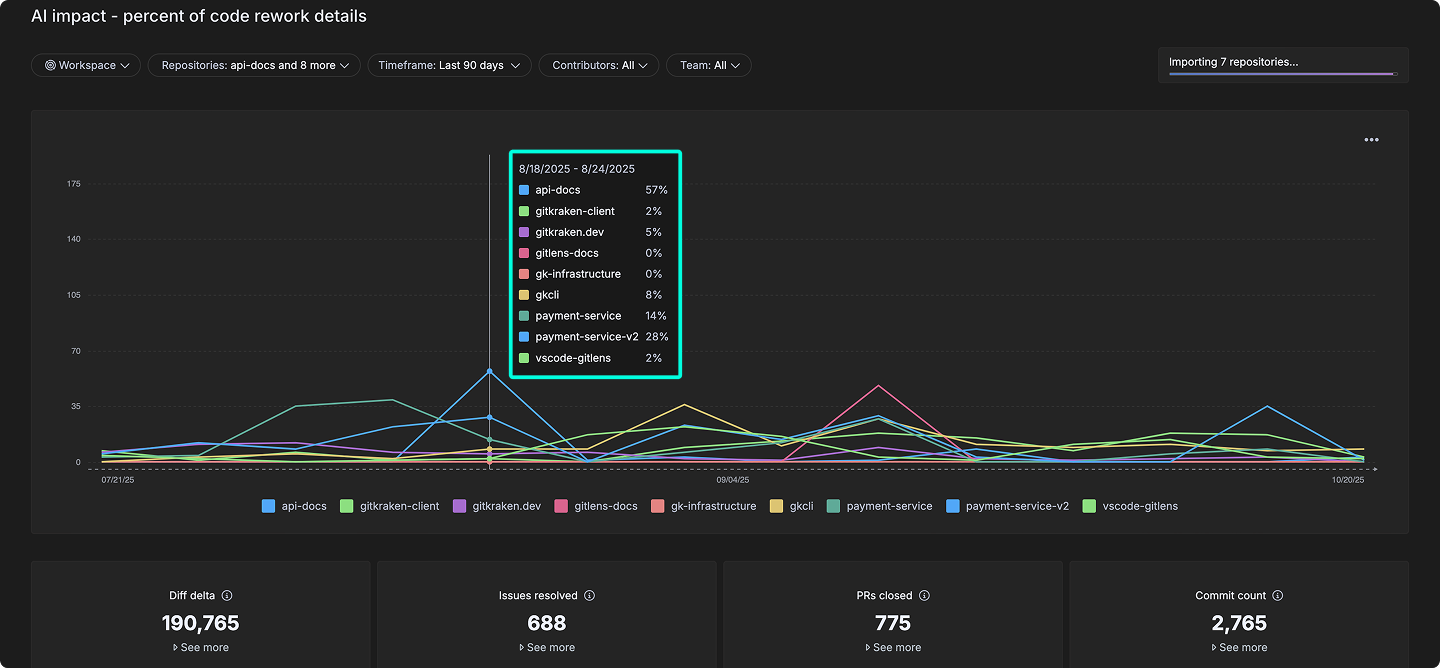

Percent of Code Rework (Churned Lines)

Definition: The percentage of recently written code that gets modified again quickly, which may indicate instability or changing requirements.

Percent of Code Rework (Churned Lines) calculates how much recently written code gets modified again, signaling instability, shifting requirements, or potential quality issues. High churn rates can reflect rework caused by unclear goals, rushed reviews, or limitations in AI-assisted code generation.

The detailed view breaks this down across repositories and time periods, helping teams see where rework is concentrated and how it aligns with activity levels like commits, pull requests, and issue resolutions. By monitoring this metric, teams can assess whether AI tools are improving long-term code quality or introducing avoidable rework.
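The rework percentage described above can be sketched as a simple ratio. This Python example assumes each line is recorded with the date it was written and the date it was next modified, and it treats a line as churned if that modification falls within a fixed window. The 21-day default and the `percent_code_rework` function are hypothetical; this page does not state what window "recently" or "quickly" refers to.

```python
from datetime import date, timedelta
from typing import Optional


def percent_code_rework(
    lines: list[tuple[date, Optional[date]]],
    window: timedelta = timedelta(days=21),  # hypothetical threshold
) -> float:
    """Each tuple is (date written, date next modified or None).

    Returns the percentage of lines modified again within `window`.
    """
    if not lines:
        return 0.0
    churned = sum(
        1
        for written, modified in lines
        if modified is not None and modified - written <= window
    )
    return 100.0 * churned / len(lines)


history = [
    (date(2026, 1, 1), date(2026, 1, 5)),   # modified after 4 days: churned
    (date(2026, 1, 1), None),               # never modified again
    (date(2026, 1, 1), date(2026, 3, 1)),   # modified after 59 days: not churned
    (date(2026, 1, 2), date(2026, 1, 20)),  # modified after 18 days: churned
]
print(percent_code_rework(history))  # 50.0
```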

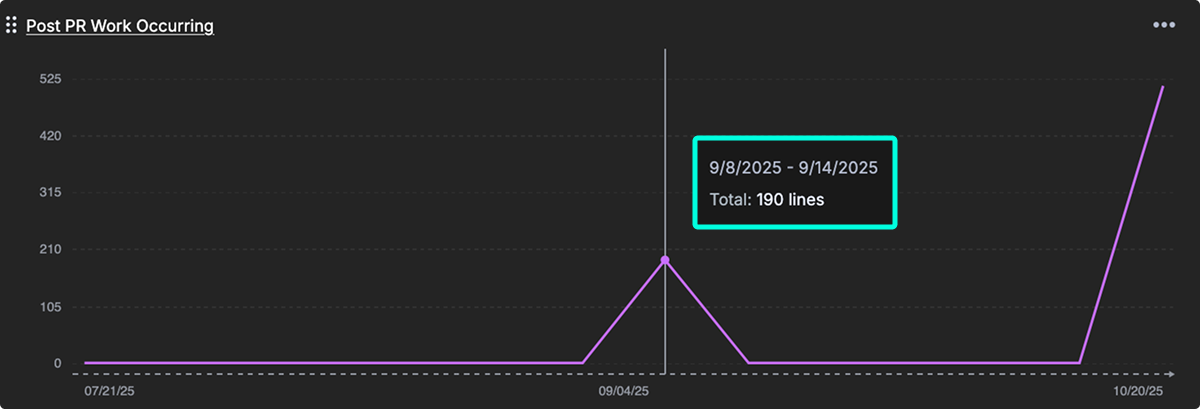

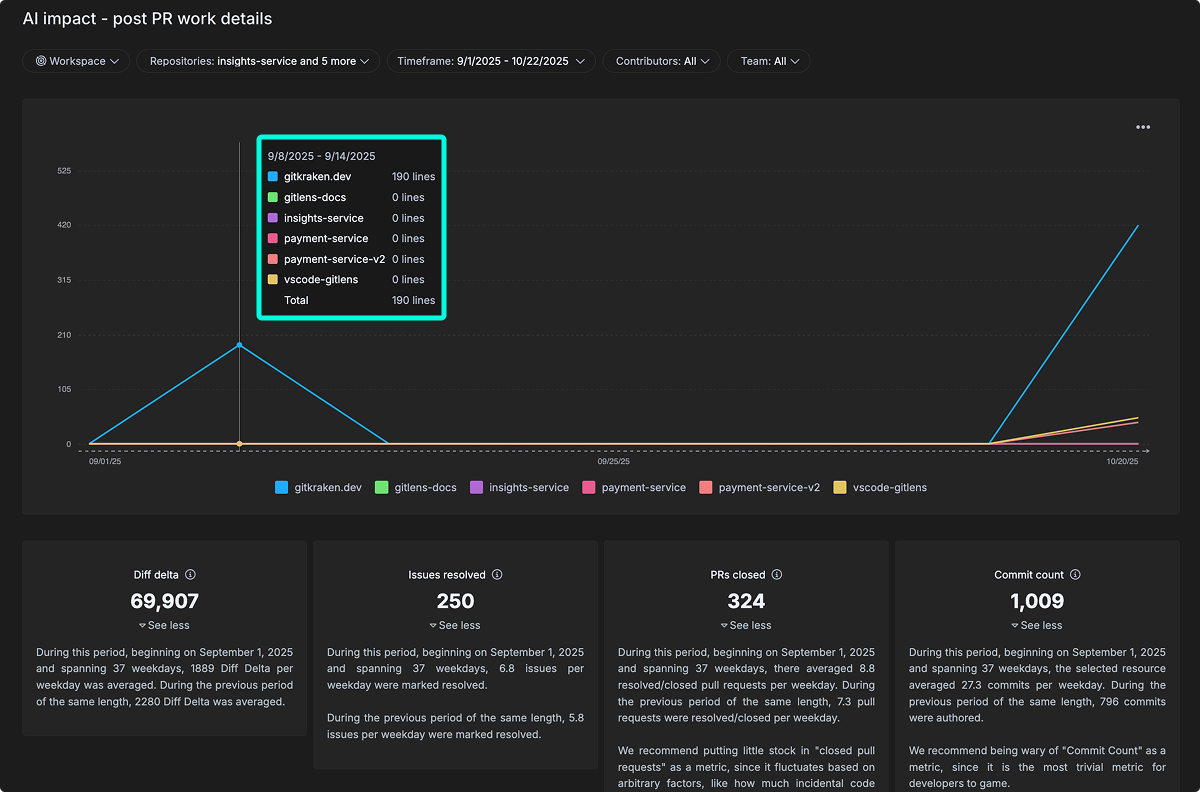

Post PR Work Occurring

Definition: Follow-up work and bug fixes needed after merging, indicating the initial quality of AI-assisted code.

Post PR Work Occurring quantifies rework and fixes needed after a pull request is merged, providing an early signal of code quality. It is especially useful when evaluating AI-assisted development, where code may pass initial review but still require corrections post-merge.

The detailed view breaks this activity down by repository and time period, revealing patterns in post-merge changes and how they relate to broader development activity, such as commits and pull requests. Tracking this metric over time helps teams improve review quality and identify whether AI-assisted coding leads to more or less post-merge rework.
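One way to picture post-merge rework is to look for later commits that touch files a merged pull request changed. The Python sketch below does this under loud assumptions: the `MergedPR` and `Commit` classes, the `post_pr_commits` function, the file-overlap rule, and the 14-day window are all hypothetical and are not described on this page as GitKraken's method.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class MergedPR:
    number: int
    merged_on: date
    files: frozenset[str]


@dataclass
class Commit:
    sha: str
    committed_on: date
    files: frozenset[str]


def post_pr_commits(
    pr: MergedPR,
    commits: list[Commit],
    window: timedelta = timedelta(days=14),  # hypothetical follow-up window
) -> list[Commit]:
    """Return commits made after the merge, within `window`,
    that touch at least one file changed by the pull request."""
    deadline = pr.merged_on + window
    return [
        c
        for c in commits
        if pr.merged_on < c.committed_on <= deadline and pr.files & c.files
    ]


pr = MergedPR(1, date(2026, 1, 10), frozenset({"app.py", "util.py"}))
commits = [
    Commit("a1", date(2026, 1, 12), frozenset({"app.py"})),     # follow-up fix
    Commit("b2", date(2026, 1, 12), frozenset({"README.md"})),  # unrelated file
    Commit("c3", date(2026, 2, 20), frozenset({"util.py"})),    # outside window
]
print([c.sha for c in post_pr_commits(pr, commits)])  # ['a1']
```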

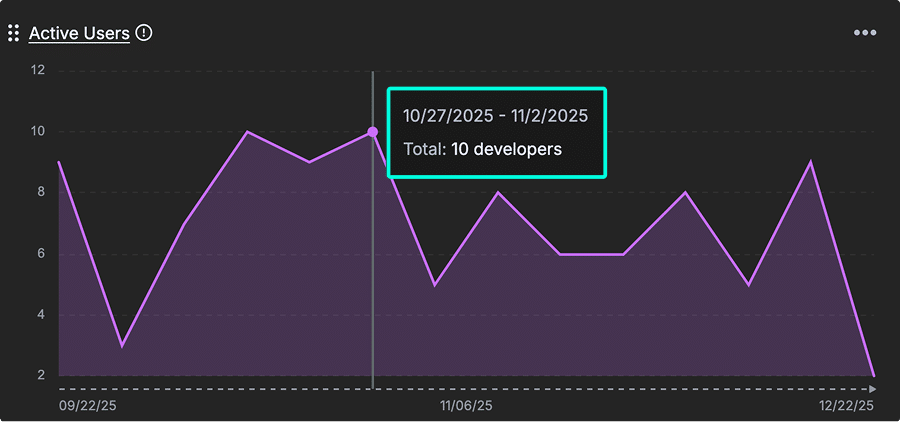

Active Users

Definition: The total count of unique users active in the connected AI provider integrations.

Active Users counts developers actively using AI coding assistants from connected providers. It establishes a baseline for tracking AI adoption across your teams and supports analysis of AI tool investment impact and developer productivity trends.
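Counting unique users across several providers comes down to deduplication. The Python sketch below assumes users are matched across providers by email address, compared case-insensitively. This page does not say how users are matched, and `count_active_users` is a hypothetical helper.

```python
def count_active_users(activity_by_provider: dict[str, list[str]]) -> int:
    """Count distinct users across providers.

    Assumption: a user is identified by email, ignoring case and
    surrounding whitespace.
    """
    unique = set()
    for emails in activity_by_provider.values():
        unique.update(email.strip().lower() for email in emails)
    return len(unique)


activity = {
    "Claude Code": ["ana@example.com", "bo@example.com"],
    "Cursor": ["Ana@example.com"],  # same person as in Claude Code
    "GitHub Copilot": ["cy@example.com"],
}
print(count_active_users(activity))  # 3
```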

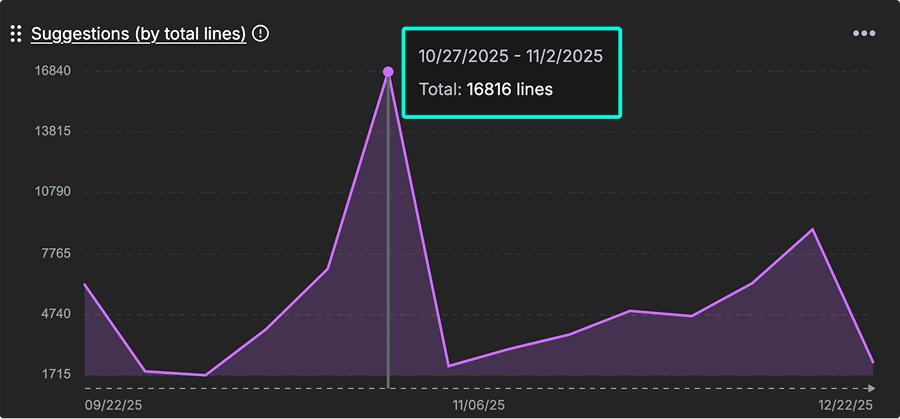

Suggestions (by total lines)

Definition: The number of suggestions offered by your connected AI provider integrations, measured in total lines.

Suggestions (by total lines) tracks the volume of AI-generated code suggestions offered to developers. It provides scale context for measuring the extent of AI contribution to overall development output and helps teams evaluate how heavily AI is being leveraged in the coding process.

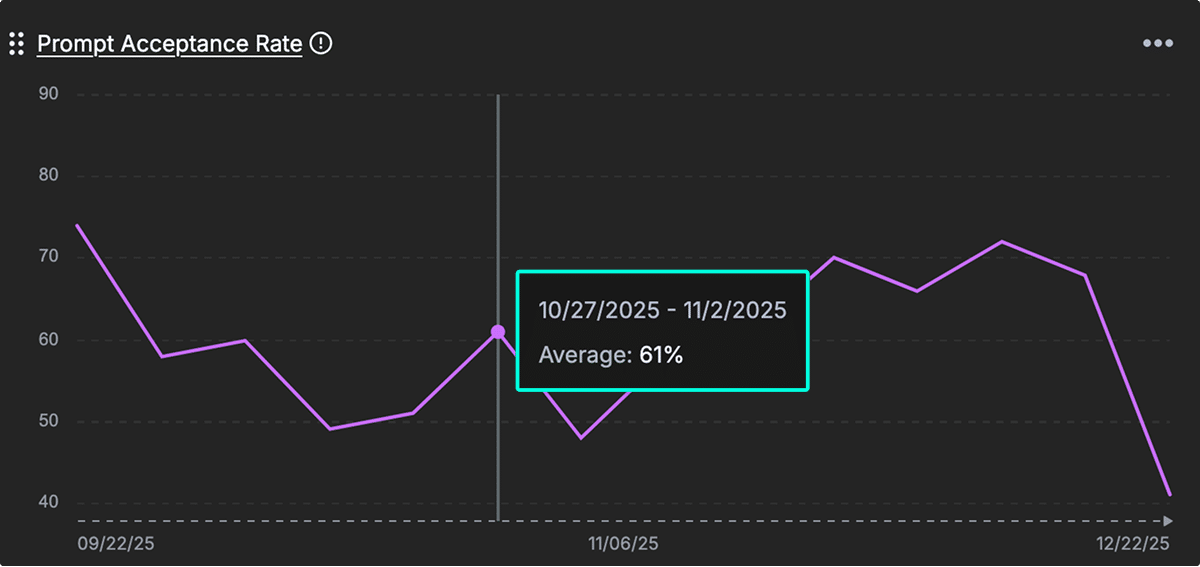

Prompt Acceptance Rate

Definition: The percentage of prompt results from your connected AI provider integrations that developers accepted.

Prompt Acceptance Rate measures the share of AI-generated prompt suggestions that developers accept. A higher rate indicates stronger alignment between AI outputs and developer expectations, signaling trust and effectiveness in AI-assisted workflows.
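An acceptance rate is a ratio of accepted suggestions to offered suggestions. The Python sketch below shows that arithmetic. The `acceptance_rate` function is a hypothetical helper, and whether GitKraken Insights counts by suggestion or by line for this metric is an assumption not confirmed here.

```python
def acceptance_rate(accepted: int, offered: int) -> float:
    """Return accepted suggestions as a percentage of offered suggestions."""
    if accepted < 0 or offered < 0 or accepted > offered:
        raise ValueError("expected 0 <= accepted <= offered")
    if offered == 0:
        return 0.0  # nothing offered, so report 0 rather than divide by zero
    return 100.0 * accepted / offered


print(acceptance_rate(accepted=45, offered=150))  # 30.0
```

The same ratio applies to tab suggestions by passing tab counts instead of prompt counts.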

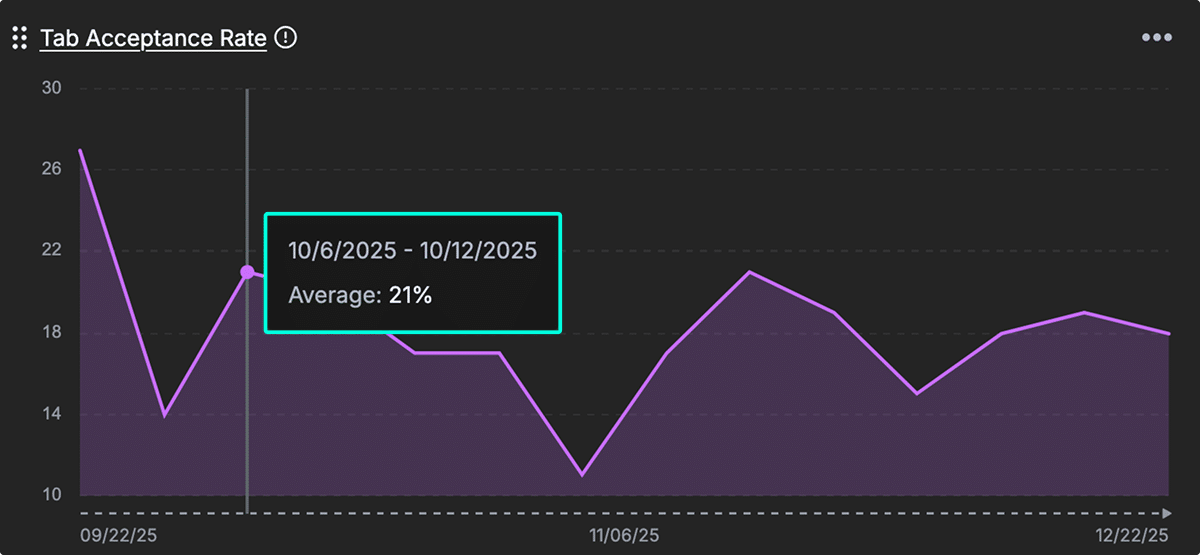

Tab Acceptance Rate

Definition: The percentage of AI-generated tab suggestions that developers accept.

Like prompt acceptance, this metric reflects the effectiveness and usability of AI suggestions. A higher tab acceptance rate indicates that developers find the suggestions useful and frictionless to apply, helping gauge the seamlessness of AI integration in development workflows.